AWS Local Zones are now available in four new metro areas—Buenos Aires, Copenhagen, Helsinki, and Muscat. You can now use these Local Zones to deliver applications that require single-digit millisecond latency or local data processing.


AWS Regions

AWS Global Infrastructure at a glance!

With a new Region launched in Switzerland 🇨🇭 last week, AWS now has 28 active Regions and 90 Availability Zones! Let’s break down what that actually means ⬇️

What is an AWS Region? It is a separate geographic area that consists of multiple separate Availability Zones (AZs). AWS offers Regions with a multiple-AZ design.

What is an Availability Zone? An Availability Zone (AZ) consists of one or more data centers at a location within an AWS Region. All AZs in an AWS Region are connected through redundant, ultra-low-latency networks.

What is a Data Center? A Data Center (DC) is a physical facility that houses all the necessary IT equipment, such as servers, storage, network systems, routers, and firewalls.

Many Data Centers -> AZ, Many AZs -> Region, Many Regions -> AWS Global Infrastructure!

Image credit: AWSGeek

The Amazon Distributed Computing Manifesto

Back in 1998, a group of senior engineers at Amazon wrote the Distributed Computing Manifesto, an internal document that would go on to influence the next two decades of system and architecture design at Amazon.

Source (Werner Vogels): https://www.allthingsdistributed.com/2022/11/amazon-1998-distributed-computing-manifesto.html

List AWS Security Groups open to 0.0.0.0/0

We all know that opening security groups to the world is bad practice, right? We can audit for this by running queries like the following:

Inbound:

$ aws ec2 --region us-west-2 describe-security-groups --filters Name=ip-permission.cidr,Values='0.0.0.0/0' --query "SecurityGroups[*].{Name:GroupName,ID:GroupId}" --output table

| DescribeSecurityGroups |
+-----------------------+-------------------+
| ID | Name |
+-----------------------+-------------------+
| sg-0b977c7003c7b280 | launch-wizard-2 |
+-----------------------+-------------------+

Outbound:

$ aws ec2 --region us-west-2 describe-security-groups --filters Name=egress.ip-permission.cidr,Values='0.0.0.0/0' --query "SecurityGroups[*].{Name:GroupName,ID:GroupId}" --output table

| DescribeSecurityGroups |

+-----------------------+-------------------------------------------------+

| ID | Name |

+-----------------------+-------------------------------------------------+

| sg-61de04 | vivXXXX_XX_US_West |

| sg-9eefe3 | CentOS 6 |

| sg-9479a0 | default |

| sg-e6b0a4 | ElasticMapReduce-master |

| sg-077c79703c7b280 | launch-wizard-2 |

| sg-b24375 | default |

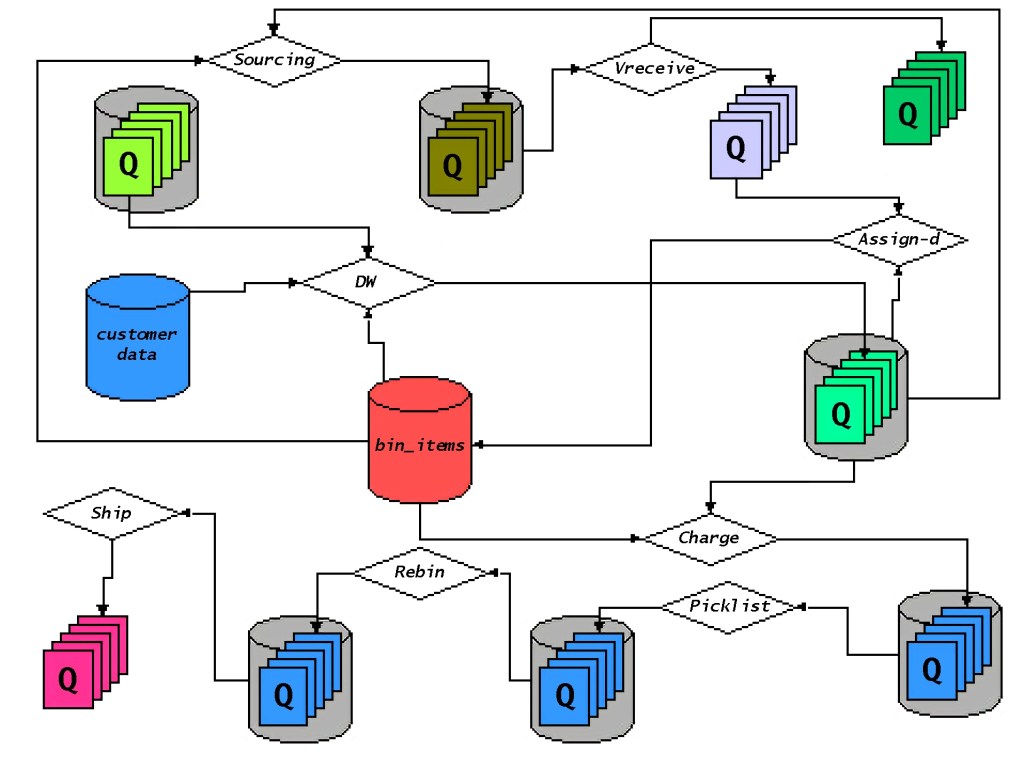

+-----------------------+-------------------------------------------------+EBS Storage Performance Notes – Instance throughput vs Volume throughput

I wanted to write some brief guidance on this topic, since it is a recurring question when configuring storage, not only in the cloud but also on bare-metal servers.

What is throughput on a volume?

Throughput is the measure of the amount of data transferred from/to a storage device per time unit (typically seconds).

The throughput consumed on a volume is calculated using this formula:

IOPS (I/O operations per second) x BS (block size) = Throughput

As an example, if we are writing at 1200 ops/sec and the write size is around 125 KB, the total throughput is about 150 MB/s.
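The arithmetic above can be sketched in a few lines of Python. The function name is made up for illustration, and the sketch assumes decimal units (1 MB = 1000 KB), which is what makes the example numbers come out round.

```python
def throughput_mb_per_s(iops, block_size_kb):
    """Throughput = IOPS x block size (assumes 1 MB = 1000 KB)."""
    return iops * block_size_kb / 1000


# 1200 writes per second at roughly 125 KB each
print(throughput_mb_per_s(1200, 125))  # 150.0
```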

Why is this important?

This is important because we have to be aware of the Maximum Total Throughput Capacity for a specific volume vs the Maximum Total Instance Throughput.

If your instance type (or server) can drive a throughput of 1250 MiB/s (e.g. m4.16xl) and your EBS volume's maximum throughput is 500 MiB/s (e.g. st1), you will not only hit a bottleneck when writing to that volume, but throttling may also occur (as it does with EBS on cloud services).

How do I find the maximum throughput for EC2 instances and EBS volumes?

Here is the documentation on maximum instance throughput for every EC2 instance type: https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ebs-optimized.html

And here is the documentation on maximum EBS volume throughput: https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/ebs-volume-types.html

How do I solve the problem?

If the instance/server can drive more throughput than a single volume can absorb, add volumes or split the storage capacity across more volumes, so that the load/throughput is distributed across them.
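As a rough sketch of why splitting helps: the usable throughput is capped by whichever is smaller, the instance limit or the sum of the volume limits. The function below is illustrative only. It assumes the workload spreads evenly across the volumes, and it uses the 500 MB/s (m4.10xl) and 160 MB/s (gp2) figures quoted elsewhere in this post.

```python
def aggregate_throughput(instance_limit, volume_limit, volume_count):
    """Best-case throughput: the smaller of the instance cap and the
    combined volume caps. Assumes I/O is spread evenly over all volumes."""
    return min(instance_limit, volume_limit * volume_count)


for count in (1, 3, 4):
    print(count, "volume(s):", aggregate_throughput(500, 160, count), "MB/s")
```

With one volume the volume is the bottleneck; by the fourth volume the instance limit takes over, so adding more volumes beyond that point would not help.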

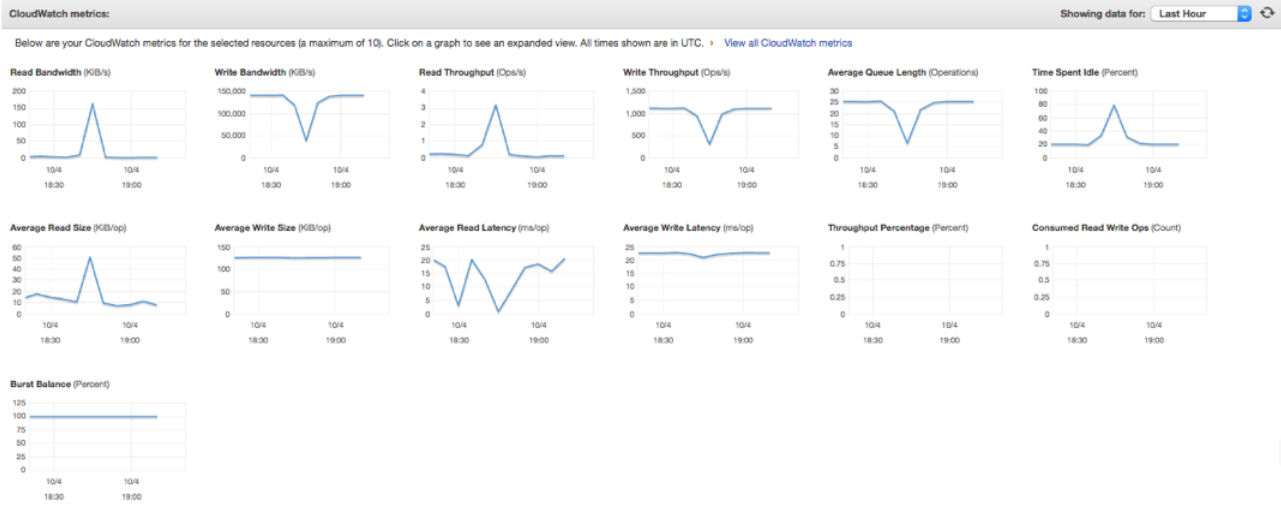

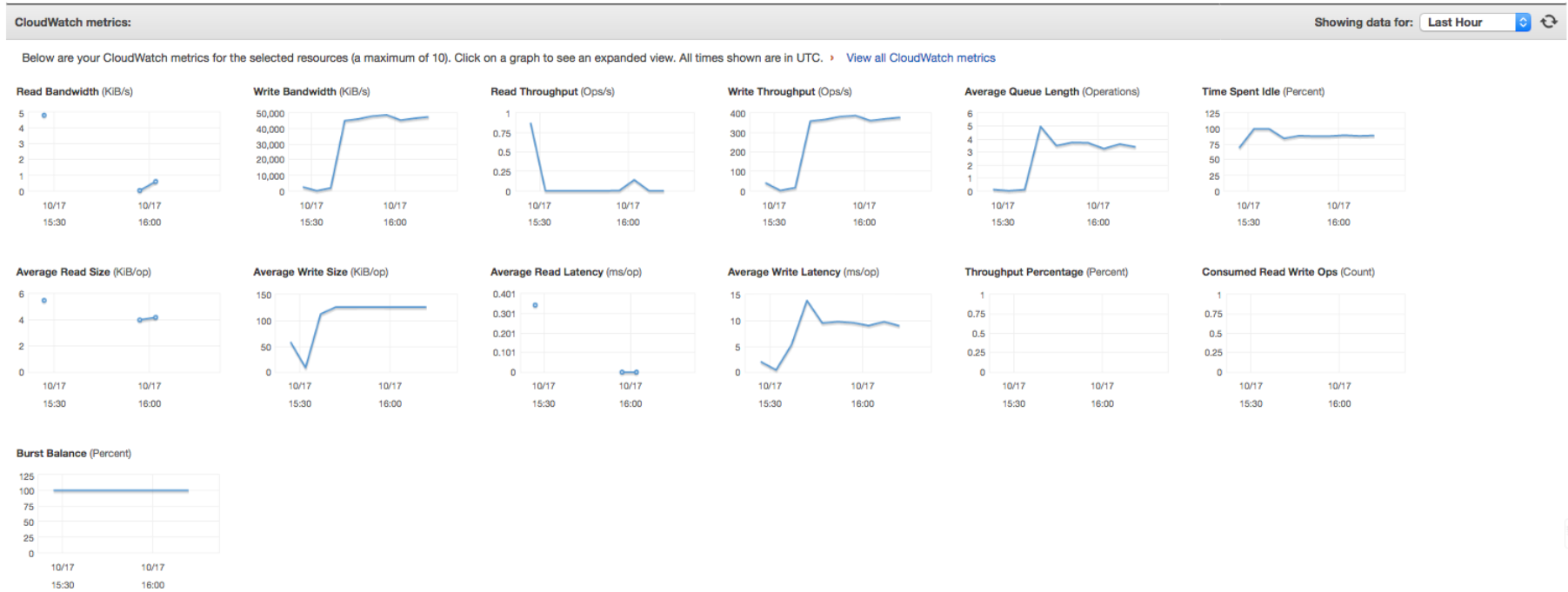

As an example, here are some metrics with different volume configurations:

1 x 3000GB – 9000IOPS volume:

3 x 1000GB – 3000IOPS volume:

Look at some of the metrics. Both configurations use the same instance type (m4.10xl, 500 MB/s throughput) and the same volume type (gp2, 160 MB/s throughput), and run the same job:

- Using 1 volume, Write/Read Latency is around 20-25 ms/op. This value is high compared to 3x1000GB volumes.

- Using 1 volume, Avg Queue length is 25. The queue depth is the number of pending I/O requests from your application to your volume. For maximum consistency, a Provisioned IOPS volume must maintain an average queue depth (rounded to the nearest whole number) of one for every 500 provisioned IOPS in a minute. In this scenario, 9000/500 = 18, so a queue length of 18 or higher is needed to reach 9000 IOPS.

- Burst Balance is 100%, which is Ok, but if this balance drops to zero (it will happen if volume capacity keeps being exceeded), all the requests will be throttled and you’ll start seeing IO errors.

- In both scenarios, Avg Write Size is pretty large (around 125KiB/op), which will typically cause the volume to hit the throughput limit before hitting the IOPS limit.

- Using 1 volume, the write rate is around 1200 ops/sec. With a write size of around 125 KB, that consumes about 150 MB/s (IOPS x BS = Throughput).
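The two rules of thumb in the list above can be checked with a short sketch. The helper names are hypothetical, the 500-IOPS-per-queue-slot figure is the one quoted above, and decimal units (1 MB = 1000 KB) are assumed.

```python
def required_queue_depth(provisioned_iops, iops_per_slot=500):
    """Average queue depth needed to sustain the provisioned IOPS."""
    return round(provisioned_iops / iops_per_slot)


def iops_at_throughput_limit(throughput_limit_mb_s, io_size_kb):
    """Operations per second at which the throughput cap is reached."""
    return throughput_limit_mb_s * 1000 / io_size_kb


print(required_queue_depth(9000))          # 18
print(iops_at_throughput_limit(160, 125))  # 1280.0
```

With 125 KB writes, a 160 MB/s volume saturates at about 1280 ops/sec, far below 9000 IOPS. That is consistent with the observed rate of around 1200 ops/sec on the single volume.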

Getting latest EMR release label

The latest release label is usually posted on EMR’s What’s New page.

So one way to get the latest EMR release label is:

curl -s https://docs.aws.amazon.com/emr/latest/ReleaseGuide/emr-whatsnew.html |grep "(Latest)"|head -n1|awk '{ print $3 }'
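For readers less familiar with grep/awk, here is a Python sketch of the same text processing: take the first line containing "(Latest)" and return its third whitespace-separated field. The sample lines in the usage are made up and do not reflect the real page markup. Like the one-liner, this breaks if the page layout changes.

```python
def latest_release_label(lines):
    """Mirror of: grep "(Latest)" | head -n1 | awk '{ print $3 }'"""
    for line in lines:
        if "(Latest)" in line:
            fields = line.split()
            # awk prints an empty string when there is no third field
            return fields[2] if len(fields) >= 3 else ""
    return None  # no matching line at all


# Hypothetical page content, for illustration only
sample = ["<p>intro</p>", "Release version emr-0.0.0 (Latest)"]
print(latest_release_label(sample))  # emr-0.0.0
```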

Have fun!

AWS S3 API – Throughput Notes

Some notes on settings to maximize throughput and increase parallelism while using S3 API:

aws configure set default.s3.max_concurrent_requests 20
aws configure set default.s3.max_queue_size 10000
aws configure set default.s3.multipart_threshold 64MB
aws configure set default.s3.multipart_chunksize 16MB
aws configure set default.s3.max_bandwidth 50MB/s
aws configure set default.s3.use_accelerate_endpoint true
aws configure set default.s3.addressing_style path

max_concurrent_requests

Default – 10

The aws s3 transfer commands are multithreaded. At any given time, multiple requests to Amazon S3 are in flight. For example, if you are uploading a directory via aws s3 cp localdir s3://bucket/ --recursive, the AWS CLI could be uploading the local files localdir/file1, localdir/file2, and localdir/file3 in parallel. The max_concurrent_requests setting specifies the maximum number of requests that are allowed in flight at any given time.

You may need to change this value for a few reasons:

- Decreasing this value – On some environments, the default of 10 concurrent requests can overwhelm a system. This may cause connection timeouts or slow the responsiveness of the system. Lowering this value will make the S3 transfer commands less resource intensive. The tradeoff is that S3 transfers may take longer to complete. Lowering this value may be necessary if using a tool such as trickle to limit bandwidth.

- Increasing this value – In some scenarios, you may want the S3 transfers to complete as quickly as possible, using as much network bandwidth as necessary. In this scenario, the default number of concurrent requests may not be sufficient to utilize all the network bandwidth available. Increasing this value may improve the time it takes to complete an S3 transfer.

max_queue_size

Default – 1000

The AWS CLI internally uses a producer-consumer model, where we queue up S3 tasks that are then executed by consumers, which in this case utilize a bounded thread pool, controlled by max_concurrent_requests. A task generally maps to a single S3 operation. For example, a task could be a PutObjectTask, a GetObjectTask, or an UploadPartTask. The enqueuing rate can be much faster than the rate at which consumers are executing tasks. To avoid unbounded growth, the task queue size is capped to a specific size. This configuration value changes the value of that maximum number.

You generally will not need to change this value. This value also corresponds to the number of tasks we are aware of that need to be executed. This means that by default we can only see 1000 tasks ahead. Until the S3 command knows the total number of tasks executed, the progress line will show a total of .... Increasing this value means that we will be able to more quickly know the total number of tasks needed, assuming that the enqueuing rate is quicker than the rate of task consumption. The tradeoff is that a larger max queue size will require more memory.
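To make the producer-consumer idea concrete, here is a minimal Python sketch of a bounded task queue drained by a fixed pool of worker threads. It illustrates the pattern only and is not the AWS CLI's actual implementation. The function name is hypothetical, and the two parameters simply borrow the names of the settings they stand in for.

```python
import queue
import threading


def run_transfers(task_ids, max_concurrent_requests=10, max_queue_size=1000):
    """Process tasks through a bounded queue and a fixed set of consumers."""
    tasks = queue.Queue(maxsize=max_queue_size)  # put() blocks when full
    done = []
    done_lock = threading.Lock()
    stop = object()  # sentinel that tells one consumer to exit

    def consumer():
        while True:
            item = tasks.get()
            if item is stop:
                break
            # Stand-in for a single S3 operation (e.g. uploading one part)
            with done_lock:
                done.append(item)

    workers = [threading.Thread(target=consumer)
               for _ in range(max_concurrent_requests)]
    for worker in workers:
        worker.start()
    for task_id in task_ids:  # producer: waits whenever the queue is full
        tasks.put(task_id)
    for _ in workers:         # one sentinel per consumer
        tasks.put(stop)
    for worker in workers:
        worker.join()
    return sorted(done)


print(len(run_transfers(range(50), max_concurrent_requests=4, max_queue_size=5)))  # 50
```

The queue size bounds how far the producer can run ahead of the consumers (and how much memory pending tasks use), while the thread count bounds how many operations run at once.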

multipart_threshold

Default – 8MB

When uploading, downloading, or copying a file, the S3 commands will switch to multipart operations if the file reaches a given size threshold. The multipart_threshold controls this value. You can specify this value in one of two ways:

- The file size in bytes. For example, 1048576.

- The file size with a size suffix. You can use KB, MB, GB, TB. For example: 10MB, 1GB. Note that S3 imposes constraints on valid values that can be used for multipart operations.

multipart_chunksize

Default – 8MB

Minimum For Uploads – 5MB

Once the S3 commands have decided to use multipart operations, the file is divided into chunks. This configuration option specifies what the chunk size (also referred to as the part size) should be. This value can be specified using the same semantics as multipart_threshold, that is, either as the number of bytes as an integer, or using a size suffix.
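As a sketch of how multipart_threshold and multipart_chunksize interact, the snippet below parses a size value and estimates how many parts a transfer would use. The helper names are hypothetical, and it assumes the size suffixes are binary multiples (1 MB = 1024 * 1024 bytes). It also ignores any adjustment made to satisfy S3's multipart constraints.

```python
import math

# Assumed multipliers: binary units
_SUFFIXES = {"KB": 1024, "MB": 1024**2, "GB": 1024**3, "TB": 1024**4}


def parse_size(value):
    """Accept plain bytes ('1048576') or a suffixed size ('10MB')."""
    text = str(value).strip().upper()
    for suffix, multiplier in _SUFFIXES.items():
        if text.endswith(suffix):
            return int(text[:-len(suffix)]) * multiplier
    return int(text)


def part_count(file_size, threshold="8MB", chunksize="8MB"):
    """1 for a single-request transfer, else the number of chunks."""
    if file_size < parse_size(threshold):
        return 1
    return math.ceil(file_size / parse_size(chunksize))


# A 100 MB file with the 64MB threshold / 16MB chunk size set above
print(part_count(parse_size("100MB"), "64MB", "16MB"))  # 7
```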

max_bandwidth

Default – None

This controls the maximum bandwidth that the S3 commands will utilize when streaming content data to and from S3. Thus, this value only applies for uploads and downloads. It does not apply to copies nor deletes because those data transfers take place server side. The value is in terms of bytes per second. The value can be specified as:

- An integer. For example, 1048576 would set the maximum bandwidth usage to 1 megabyte per second.

- A rate suffix. You can specify rate suffixes using: KB/s, MB/s, GB/s, etc. For example: 300KB/s, 10MB/s.

In general, it is recommended to first use max_concurrent_requests to lower transfers to the desired bandwidth consumption. The max_bandwidth setting should then be used to further limit bandwidth consumption if setting max_concurrent_requests is unable to lower bandwidth consumption to the desired rate. This is recommended because max_concurrent_requests controls how many threads are currently running. So if a high max_concurrent_requests value is set and a low max_bandwidth value is set, it may result in threads having to wait unnecessarily, which can lead to excess resource consumption and connection timeouts.

use_accelerate_endpoint

Default – false

If set to true, will direct all Amazon S3 requests to the S3 Accelerate endpoint: s3-accelerate.amazonaws.com. To use this endpoint, your bucket must be enabled to use S3 Accelerate. All requests will be sent using the virtual style of bucket addressing: my-bucket.s3-accelerate.amazonaws.com. Any ListBuckets, CreateBucket, and DeleteBucket requests will not be sent to the Accelerate endpoint, as the endpoint does not support those operations. This behavior can also be set if the --endpoint-url parameter is set to https://s3-accelerate.amazonaws.com or http://s3-accelerate.amazonaws.com for any s3 or s3api command. This option is mutually exclusive with the use_dualstack_endpoint option.

use_dualstack_endpoint

Default – false

If set to true, will direct all Amazon S3 requests to the dual IPv4 / IPv6 endpoint for the configured region. This option is mutually exclusive with the use_accelerate_endpoint option.

addressing_style

Default – auto

There are two styles of constructing an S3 endpoint. The first is with the bucket included as part of the hostname. This corresponds to the addressing style of virtual. The second is with the bucket included as part of the path of the URI, corresponding to the addressing style of path. The default value in the CLI is to use auto, which will attempt to use virtual where possible, but will fall back to path style if necessary. For example, if your bucket name is not DNS compatible, the bucket name cannot be part of the hostname and must be in the path. With auto, the CLI will detect this condition and automatically switch to path style for you. If you set the addressing style to path, you must ensure that the AWS region you configured in the AWS CLI matches the region of your bucket.
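The difference between the two styles is easiest to see in the resulting URLs. The sketch below is illustrative only: the function is hypothetical, and the DNS-compatibility check is a deliberately simplified assumption rather than the CLI's exact rule.

```python
import re

# Simplified assumption: lowercase letters, digits and hyphens, 3-63 chars
_DNS_COMPATIBLE = re.compile(r"[a-z0-9][a-z0-9-]{1,61}[a-z0-9]")


def s3_url(bucket, key, region, addressing_style="auto"):
    """Build an S3 object URL in virtual or path style."""
    if addressing_style == "auto":
        ok = _DNS_COMPATIBLE.fullmatch(bucket) is not None
        addressing_style = "virtual" if ok else "path"
    if addressing_style == "virtual":
        # Bucket is part of the hostname
        return f"https://{bucket}.s3.{region}.amazonaws.com/{key}"
    # Bucket is the first path segment
    return f"https://s3.{region}.amazonaws.com/{bucket}/{key}"


print(s3_url("my-bucket", "a.txt", "us-west-2"))
print(s3_url("My_Bucket", "a.txt", "us-west-2"))
```

The first call yields a virtual-style URL. The second falls back to path style because the bucket name cannot be used as a hostname label.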

payload_signing_enabled

If set to true, s3 payloads will receive additional content validation in the form of a SHA256 checksum which will be calculated for you and included in the request signature. If set to false, the checksum will not be calculated. Disabling this can be useful to save the performance overhead that the checksum calculation would otherwise cause.

By default, this is disabled for streaming uploads (UploadPart and PutObject), but only if a ContentMD5 is present (it is generated by default) and the endpoint uses HTTPS.
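To give a sense of the work this setting controls, the sketch below computes the value that would go into the request signature: a hex SHA-256 digest of the body when payload signing is on, or the literal UNSIGNED-PAYLOAD marker when it is off. The function name is hypothetical, and the rest of the signing process is omitted.

```python
import hashlib


def payload_hash(body, signing_enabled=True):
    """Hex SHA-256 of the request body, or the unsigned marker."""
    if not signing_enabled:
        return "UNSIGNED-PAYLOAD"  # no pass over the body is needed
    return hashlib.sha256(body).hexdigest()


print(payload_hash(b"hello world"))
print(payload_hash(b"hello world", signing_enabled=False))
```

Hashing requires reading the whole payload before sending, which is the overhead that disabling the option avoids on large uploads.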

Source: https://docs.aws.amazon.com/cli/latest/topic/s3-config.html

AWS EMR – Big Data in Strata New York

Will you be in New York next week (Sept 25th – Sept 28th)?


Come meet the AWS Big Data team at Strata Data Conference, where we’ll be happy to answer your questions, hear about your requirements, and help you with your big data initiatives.

See you there!